Performance

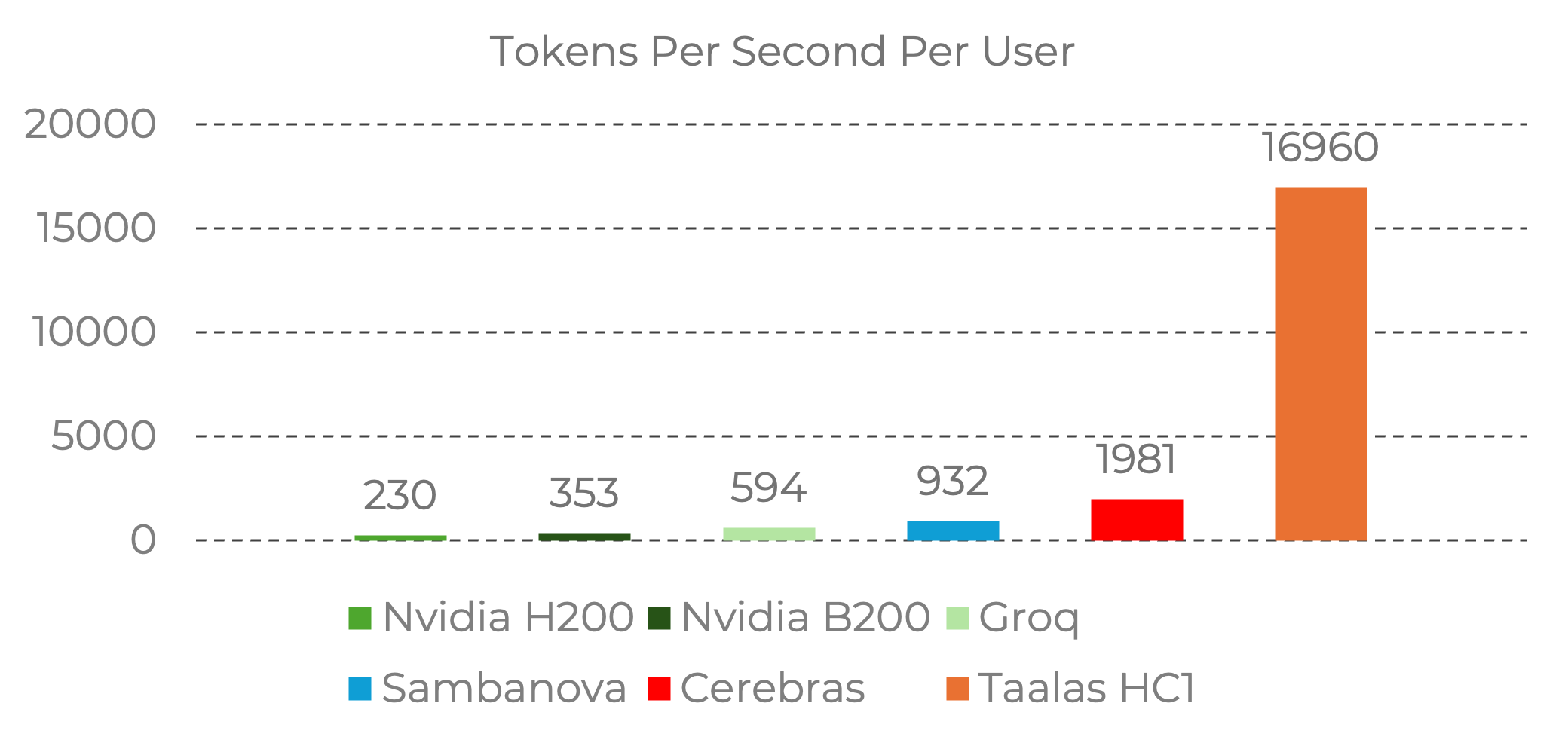

Shft HC1 delivers ultra-fast inference with near-instant response time and extreme efficiency.

Shft HC1

The world's fastest LLM inference chip.

17,000 tokens per second

Per-user throughput with near-instant responses.
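To put the throughput figure in latency terms, here is a minimal sketch that converts tokens per second into time per token and time per response. It assumes steady-state generation at the quoted rate and ignores time to first token, networking, and prompt processing; the 500-token response length and the function names are illustrative assumptions, not figures from Shft.

```python
# Back-of-envelope conversion from throughput to latency.
# Assumption: generation runs at a constant rate with no startup cost.

TOKENS_PER_SECOND = 17_000  # per-user throughput quoted for Shft HC1


def per_token_latency_us(tokens_per_second: float) -> float:
    """Average time to emit one token, in microseconds."""
    return 1_000_000 / tokens_per_second


def response_time_ms(num_tokens: int, tokens_per_second: float) -> float:
    """Time to generate num_tokens at a constant rate, in milliseconds."""
    return 1000 * num_tokens / tokens_per_second


print(round(per_token_latency_us(TOKENS_PER_SECOND), 1))   # 58.8
print(round(response_time_ms(500, TOKENS_PER_SECOND), 1))  # 29.4
```

At the quoted rate, a hypothetical 500-token answer would finish generating in roughly 29 ms under these assumptions.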

Model is the chip

Llama 3.1 8B hardwired directly onto silicon to eliminate the memory wall.

Extreme efficiency

~250 W power draw, roughly 10x lower than competing high-end AI chips.

Massive performance lead

~300x faster than standard ChatGPT, ~70x faster than H200, ~9x faster than Cerebras.
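For context, the speedup ratios can be turned into the per-user throughput each one would imply for the comparison system. This is a rough sketch that assumes every "x faster" figure is a ratio of per-user tokens per second against the 17,000 tokens per second headline number; the results are derived estimates, not measured benchmarks.

```python
# Implied baseline throughput, derived only from the quoted ratios.
# Assumption: each speedup is HC1 tokens/s divided by baseline tokens/s.

HC1_TOKENS_PER_SECOND = 17_000

# Approximate speedups as quoted above.
SPEEDUPS = {
    "standard ChatGPT": 300,
    "H200": 70,
    "Cerebras": 9,
}


def implied_baseline(hc1_tps: float, speedup: float) -> float:
    """Tokens per second the baseline would need for the ratio to hold."""
    return hc1_tps / speedup


baselines = {
    name: round(implied_baseline(HC1_TOKENS_PER_SECOND, ratio))
    for name, ratio in SPEEDUPS.items()
}

for name, tps in baselines.items():
    print(f"{name}: ~{tps} tokens per second")
```

Because the quoted ratios are themselves approximate, the implied baselines should be read as order-of-magnitude figures only.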